The Art of Invisible Design: When Technology Fades into Experience

The best interfaces are the ones you don't remember using.

There is a moment in every great piece of design when the tool disappears. The pen stops feeling like a pen and becomes an extension of thought. The dashboard stops feeling like software and becomes a window into understanding. The interface stops feeling like an interface and becomes, simply, the work.

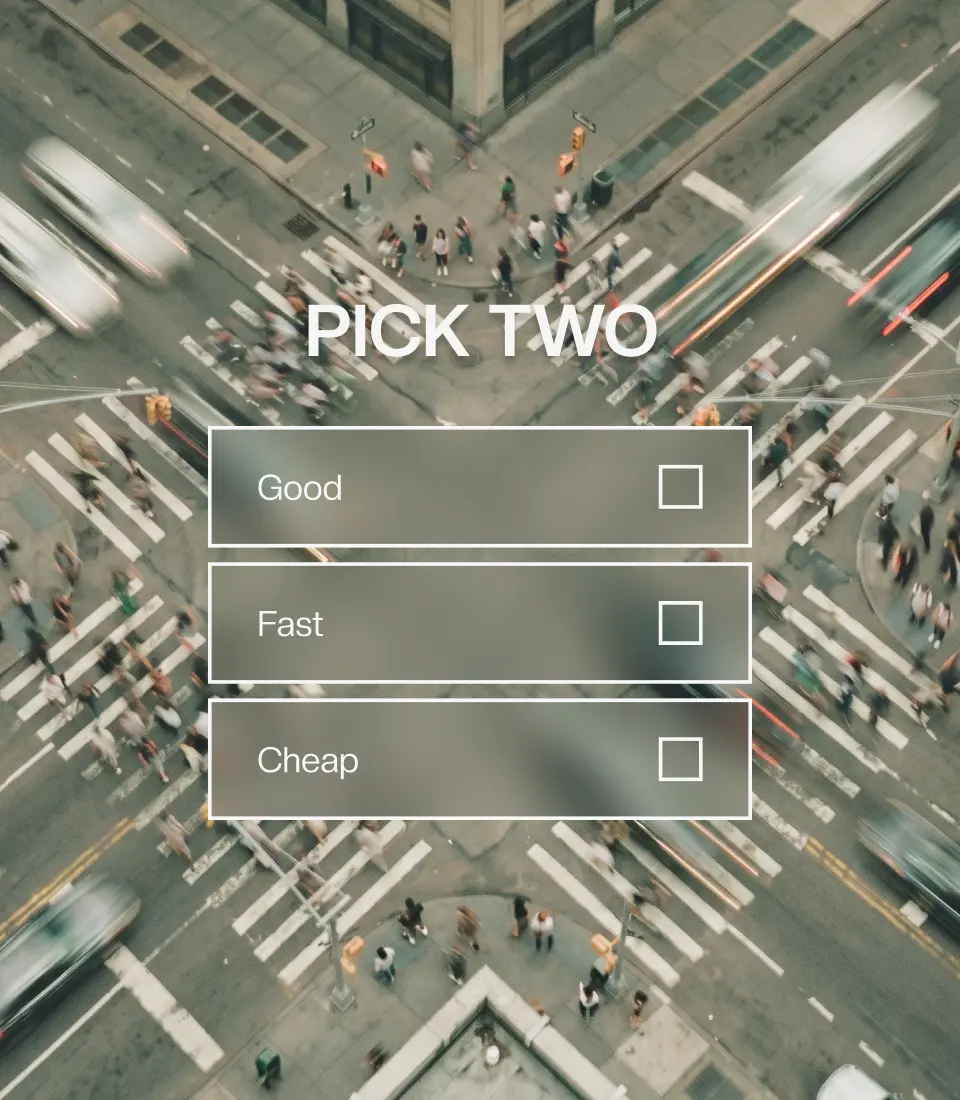

This is the paradox at the heart of excellent design: the more invisible it is, the more powerful it becomes. And yet the instinct with technology runs exactly the other way. We want to show what we built. We want users to feel the horsepower under the hood.

Invisible design resists that impulse. It argues that the measure of a well-designed system isn't how impressive it seems, but how completely it steps out of the way.

Friction is the enemy of thought

Every time a user has to think about where to click, what a button does, or why the layout shifted, they're spending cognitive capital they should be spending on their actual work. The interface has inserted itself between the person and the outcome and made itself visible in exactly the wrong way.

Invisible design isn't about minimalism for its own sake. It isn't about stripping things bare until they feel austere. It's about something more precise: removing friction between intention and outcome. When technology truly fades into experience, users stop asking "how do I use this?" and simply accomplish what they set out to do. The interface becomes transparent.

The goal isn't "look at our amazing technology." It's "look how easily you accomplished your goal."

Think about what happens when you use a keyboard shortcut you've deeply internalised: you press Cmd+Z before you even consciously register that you want to undo something. The interface anticipated a need, placed the mechanism at the threshold of thought, and responded before the thought fully formed. That's the gold standard. That's what invisible design aspires to.

The paradox of complexity

The most sophisticated systems often feel the simplest. A Google search routes your query through extraordinary infrastructure: indexing, ranking algorithms, personalisation signals, real-time computation across distributed servers. Yet what you experience is a box and results. The complexity is entirely invisible. The experience is entirely frictionless.

This is harder than it looks. It requires teams willing to absorb enormous technical complexity on behalf of the user. To build sophisticated systems and then consciously, deliberately hide them. To resist the nearly irresistible urge to let the machinery show.

The discipline required is less about design skill and more about philosophy: whose experience matters? The answer should always be the user's. Every technical constraint, every impressive capability, every feature the team is proud of must be evaluated through a single question: does exposing this help the person do their work better, or does it just make us feel clever?

When the interface adapts to you

AI introduces a new dimension to invisible design, and a new set of tensions.

For the first time, interfaces can learn. They can adapt to the user's patterns, preferences, and working style without being asked. Complexity levels can adjust and suggestions can surface at precisely the moment they're useful. The system can meet the user where they are rather than forcing the user to meet the system.

But there's a danger lurking inside that possibility. Invisible adaptation can erode the sense of agency. If the system is constantly shifting around you, personalising, predicting, and rearranging, you may start to feel like you've lost your bearings. The interface has become invisible, yes, but so has your own control over it.

The art is in calibrating when to be invisible and when to surface. When does the AI act silently on your behalf, and when does it pause to show its reasoning? When do invisible defaults serve you, and when do you need to know there's a choice being made?

The human moment

There's a deeper question here that matters especially for AI-augmented systems: what should a human actually see?

Human-in-the-loop design requires a clear theory of the human moment – the specific, intentional points where human judgment genuinely matters and must be surfaced, even at the cost of invisibility. Some decisions need to be visible by design. The AI does the heavy lifting in the background; the human is present for the moments that require discernment, creativity, or accountability.

Good invisible design doesn't mean invisibilising the human. It means reserving the human's attention for what deserves it, and routing everything else beneath the surface. The interface becomes a kind of triage: what rises to the level of requiring a person's conscious engagement, and what can be handled quietly, reliably, in the background?

This is a harder editorial problem than it might seem. It requires deep honesty about what decisions actually need human judgment versus what decisions we expose because we're uncertain, risk-averse, or simply haven't done the work of automating them well.

Four principles of invisible design

Restraint. Resist the urge to expose every capability. The feature that goes unnoticed because it's invisible is still doing its job; it's just doing it quietly.

Deep research. You can only hide friction you understand. Real invisible design comes from knowing the cognitive flow of actual work. It's essential to know not just the task list, but the thinking behind it.

Confidence. If users don't notice your design, it may feel like a failure, but in reality, it's a success. The absence of feedback is itself a signal that the thing worked.

Continuous refinement. Tiny frictions accumulate. The small inconsistency, the slightly delayed response, the redundant confirmation — each one is a reminder that technology is present. Remove them relentlessly.

Building for invisibility from the ground up

At Radiance, invisibility is the design principle we build from. The Source® is built around the conviction that the power of AI should be felt, not seen. Because the problem AI created isn't just speed. It's sameness. Tools that produce volume but lose memory, brand intelligence that resets with every new project, strategy that lives in decks and dies there.

The Source® absorbs that complexity rather than exposing it. By ingesting your brand assets, strategy, research, and past decisions, it builds a living foundation that gets sharper with every project. AI surfaces insights grounded in your actual material. Human judgment stays in the loop for the decisions that deserve it, and everything else happens beneath the surface, reliably and without fanfare.

The measure is how completely The Source® recedes into the experience of doing excellent creative work, and how rarely you find yourself thinking about the tool rather than what you made with it.

As AI systems become more capable, the interface designer's job isn't to showcase that capability. It's to direct it. To build systems that absorb the weight of accumulation, retrieval, and context and return that time and attention to the human in the loop. Not necessarily so the person forgets the technology is there, but so they're free to do what only they can: bring judgment, taste, and creative instinct to work that actually matters.

The highest compliment invisible design can receive is silence. Because the interface did exactly what it was supposed to do: it got out of the way.

The best technology you've ever used is probably the technology you've forgotten about most completely.
